Agentic Customer Support With Guardrails: Why Deterministic Workflows Beat Autonomous Agents

Emma Ke, CMO

March 29, 2026


Agentic AI sits at the Peak of Inflated Expectations on Gartner's 2025 Hype Cycle for Artificial Intelligence. Meanwhile, 88% of organizations reported confirmed or suspected AI agent security incidents in the past year. The disconnect is staggering: enterprises are deploying autonomous AI agents into customer support at record speed while security, governance, and determinism lag behind.

The agentic AI customer support promise is real — AI that perceives, reasons, and acts on behalf of customers. But the implementation reality? Autonomous agents making unconstrained decisions in production environments, accessing data they shouldn't, and hallucinating actions that no human approved.

The fix isn't less AI. It's better architecture. Deterministic workflows with AI agent guardrails deliver the intelligence of agentic systems with the predictability of governed processes. That's what this post is about — and that's what OpenClaw was built to solve.

TL;DR

- Agentic AI is peaking: Gartner places AI agents at the Peak of Inflated Expectations. Disillusionment follows for teams that deploy autonomous agents without guardrails.

- 88% reported confirmed or suspected security incidents: organizations using AI agents face real production risks when agents operate without architectural constraints.

- Deterministic workflows are the guardrail: dual-handle routing (success/error), variable scopes, and audit trails provide agentic intelligence within governed boundaries.

- 68% cost reduction: AI interactions cost ~$1.45 vs. $4.60 for human agents, but only when workflows prevent the autonomous drift that triggers expensive escalations.

- Build once, deploy everywhere: one OpenClaw workflow deploys to the web, WhatsApp, Slack, Discord, Messenger, Instagram, Telegram, and LINE. On WhatsApp alone, 175 million users message business accounts daily.

The Agentic AI Customer Support Problem

The AI customer service market is projected to reach $15.12 billion in 2026, up 25% from 2024. By the end of 2026, 40% of enterprise applications are expected to feature task-specific AI agents, up from less than 5% in 2025. The market wants agentic AI customer support. The question is whether it can survive production.

Why Autonomous Agents Fail in Production Customer Support

Autonomous AI agents — the kind built with frameworks like LangChain, AutoGen, or CrewAI — are designed for maximum flexibility. They discover tools at runtime, select APIs dynamically, and make decisions without explicit human-defined paths. This works brilliantly in demos. In production customer support, it creates three critical failures:

Unpredictable routing. The same customer query can trigger different tool chains on different days depending on model temperature, context window contents, and available tools. A returns request that correctly calls the order API on Monday might attempt a database write on Tuesday.

Data boundary violations. Autonomous agents with broad tool access can traverse data boundaries that no human authorized. An agent tasked with checking order status might access payment records, customer notes, or internal pricing — not maliciously, but because the model determined it was "relevant."

Escalation amnesia. When autonomous agents fail and escalate to humans, the context of why they failed is often lost. The human agent starts from scratch, doubling resolution time and frustrating the customer.

These aren't theoretical risks. Only 21% of organizations report actual visibility into what their AI agents can access, let alone which tools they call or what data they touch.

The 88% Security Incident Reality

The Gravitee State of AI Agent Security 2026 Report surveyed over 900 executives and technical practitioners. The findings are sobering:

- 88% reported confirmed or suspected AI agent security incidents in the past year

- 82% of executives believe their policies protect against unauthorized agent actions

- Only 21% have actual visibility into what agents can access

- Only 14.4% report AI agents going live with full security/IT approval

The gap between perceived security and actual security is where production incidents live. And in customer support — where agents handle PII, payment data, and account access — that gap is unacceptable.

As we documented in our analysis of autonomous agent security risks, the fundamental issue is architectural: autonomous agents make runtime decisions that security tools can only observe, not prevent.

AI Agent Guardrails as Architecture, Not a Bolt-On

The industry response to autonomous agent failures has been bolt-on guardrails: input filters, output validators, LLM firewalls, monitoring dashboards. These tools are valuable. They are also insufficient.

What Guardrails Actually Mean in Production

Effective AI agent guardrails aren't monitoring layers added after deployment. They're architectural constraints that define what an agent can do before it ever touches production:

Path constraints — every possible action the agent can take is explicitly defined. If a path doesn't exist in the workflow, the agent cannot take it. This eliminates the "creative problem-solving" that causes autonomous agents to access unauthorized systems.

Data scope constraints — variables are scoped to system, session, or visitor levels. An agent processing a returns request accesses session-level order data, not system-level configuration or other visitors' records.

Failure mode constraints — every blocking operation has a defined success path and error path. When an API call fails, the workflow routes deterministically — it doesn't ask the AI to "figure out what to do next."

Escalation constraints — human handoff triggers are explicit conditions, not AI judgment calls. Sentiment below threshold? Escalate. Confidence below threshold? Escalate. Topic in restricted category? Escalate.
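Escalation constraints can live in ordinary code rather than in a model's judgment. The Python sketch below assumes three things: a sentiment score on a -1 to 1 scale, a classifier confidence between 0 and 1, and a hypothetical set of restricted topics. The thresholds are placeholders, not recommended values, and the names are illustrative rather than OpenClaw's API.

```python
from dataclasses import dataclass
from typing import Optional

# Placeholder thresholds and topics (assumptions, not recommended values).
SENTIMENT_THRESHOLD = -0.5    # assumed sentiment scale: -1.0 (negative) to 1.0 (positive)
CONFIDENCE_THRESHOLD = 0.7    # assumed classifier confidence in [0, 1]
RESTRICTED_TOPICS = {"legal", "refund_dispute", "account_security"}


@dataclass(frozen=True)
class TurnSignals:
    """Signals extracted earlier in the workflow for the current customer turn."""
    sentiment: float
    confidence: float
    topic: str


def escalation_reason(signals: TurnSignals) -> Optional[str]:
    """Return why this turn must go to a human, or None if it stays automated.

    Each rule is an explicit comparison; no model is asked whether to escalate.
    """
    if signals.sentiment < SENTIMENT_THRESHOLD:
        return "sentiment_below_threshold"
    if signals.confidence < CONFIDENCE_THRESHOLD:
        return "confidence_below_threshold"
    if signals.topic in RESTRICTED_TOPICS:
        return "restricted_topic"
    return None
```

The rules are checked in a fixed order and the first match wins. That keeps the recorded escalation reason deterministic for identical inputs.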

Governed AI Agents vs Autonomous AI Agents

The distinction matters. Governed AI agents operate with full LLM intelligence — natural language understanding, contextual reasoning, multi-turn conversation — within deterministic boundaries. Autonomous AI agents operate with full LLM intelligence and full LLM unpredictability.

Governed AI agents (workflow-based):

- Execute actions only along defined paths

- Access data only within scoped boundaries

- Fail along predetermined error routes

- Generate complete audit trails

- Deliver consistent outcomes across identical inputs

Autonomous AI agents (framework-based):

- Discover and select tools at runtime

- Access any connected system the model deems relevant

- Fail in unpredictable ways requiring case-by-case debugging

- Generate logs but not deterministic audit trails

- May produce different outcomes for identical inputs

For agentic AI customer support in production, governed agents deliver the intelligence without the liability. This matches the mitigation guidance for Excessive Agency (LLM06) in the OWASP Top 10 for LLM Applications 2025: constrain what agents can do, don't just monitor what they did.
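The minimal Python sketch below makes the "defined paths only" property concrete. It expresses a workflow as an explicit graph of allowed transitions. The `WorkflowGraph` class, `UndefinedPathError`, and the node names are hypothetical illustrations, not OpenClaw's API. The point is only that the workflow rejects any transition the designer never declared, rather than attempting it.

```python
class UndefinedPathError(Exception):
    """Raised when a step requests a transition the workflow never declared."""


class WorkflowGraph:
    """Hypothetical workflow: each node lists the only nodes it may hand off to."""

    def __init__(self, edges: dict[str, set[str]]) -> None:
        self.edges = edges

    def next_node(self, current: str, requested: str) -> str:
        allowed = self.edges.get(current, set())
        if requested not in allowed:
            # No improvisation: an undeclared transition is rejected, never attempted.
            raise UndefinedPathError(f"{current} -> {requested} is not a defined path")
        return requested


# A returns flow with every legal transition spelled out in advance.
returns_flow = WorkflowGraph({
    "classify_intent": {"lookup_order", "human_handoff"},
    "lookup_order": {"show_return_options", "human_handoff"},
    "show_return_options": set(),
    "human_handoff": set(),
})
```

Here `returns_flow.next_node("classify_intent", "lookup_order")` returns `"lookup_order"`. A request like `next_node("lookup_order", "write_database")` raises `UndefinedPathError`, so the "database write on Tuesday" drift is rejected by construction.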

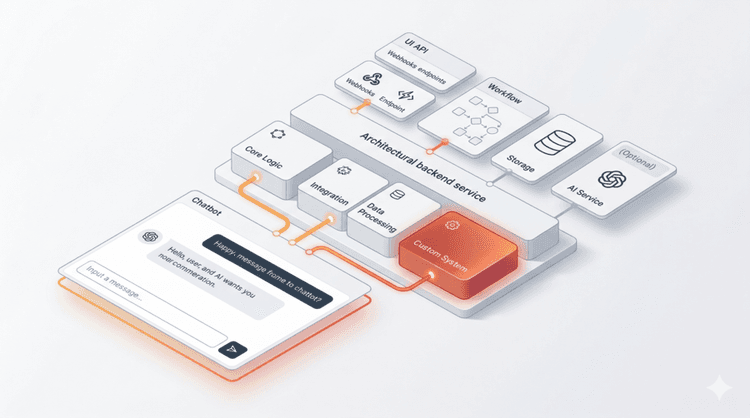

Deterministic AI Workflow Architecture for Customer Support

OpenClaw is Chat Data's deterministic AI workflow architecture. It provides 20+ node types with explicit routing, variable management, and multi-model flexibility — designed specifically for production agentic AI customer support.

Dual-Handle Routing as a Guardrail

Every blocking node in OpenClaw — API Call, Code Block, Validate Block — uses dual-handle routing: a success path and an error (or fail) path.

This isn't error handling as an afterthought. It's a deterministic AI workflow guardrail that eliminates the single most dangerous behavior of autonomous agents: improvising when something goes wrong.

Consider an order lookup workflow. The API Call node queries the order management system:

- Success handle (2xx response): Routes to a message node displaying order status, tracking information, and estimated delivery

- Error handle (4xx/5xx or timeout): Routes to a validation node that checks whether the error is transient (retry) or permanent (escalate to human agent)

With autonomous agents, a failed API call might trigger the model to attempt alternative endpoints, query cached data of unknown freshness, or generate a plausible-sounding but fabricated order status. With dual-handle routing, the error path is defined. The agent doesn't improvise. The customer gets an accurate response or a human — never a hallucination.
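Here is a minimal sketch of dual-handle routing for an API Call node. It assumes the call is passed in as a function that either returns an HTTP status code and a parsed body, or raises `TimeoutError`. The retry policy is an illustrative assumption, not OpenClaw's documented behavior. It treats timeouts, 429, and 5xx responses as transient and applies a hypothetical cap of two retries.

```python
from dataclasses import dataclass
from typing import Callable, Optional

MAX_RETRIES = 2  # hypothetical cap on retries for transient failures


@dataclass
class ApiResult:
    handle: str              # "success" or "error": the only two ways out of the node
    status: Optional[int]    # HTTP status code, or None if the call timed out
    body: Optional[dict]


def api_call_node(call: Callable[[], tuple[int, dict]]) -> ApiResult:
    """Run a blocking API call and route the outcome to exactly one handle."""
    try:
        status, body = call()
    except TimeoutError:
        return ApiResult("error", None, None)
    if 200 <= status < 300:
        return ApiResult("success", status, body)
    return ApiResult("error", status, body)   # any non-2xx response takes the error handle


def error_handler(result: ApiResult, attempt: int) -> str:
    """Validation step on the error handle: retry transient failures, escalate the rest."""
    transient = result.status is None or result.status == 429 or result.status >= 500
    if transient and attempt < MAX_RETRIES:
        return "retry"
    return "escalate_to_human"
```

Neither function ever produces an order status on its own. A failure ends in either a bounded retry or a human, which is the property the paragraph above describes.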

Variable Scopes for Data Governance

OpenClaw provides three variable scopes that function as data governance guardrails:

SYSTEM variables (read-only): Platform data like current timestamp, chatbot ID, conversation channel. Agents cannot modify system state.

SESSION variables (read-write, single conversation): Data collected during the current interaction — customer name, order number, selected options. Session variables expire when the conversation ends, preventing data leakage across interactions.

VISITOR variables (read-write, persistent): Data that persists across sessions for returning customers — customer tier, language preference, prior issue categories. Controlled persistence for personalization without unbounded data access.

This scoping prevents the data boundary violations that plague autonomous agents. A workflow processing a return cannot access another visitor's session data, system configuration, or internal pricing — even if the LLM "reasons" that such data would be helpful.
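The scoping rules can be sketched as a small in-memory store. This is a hypothetical illustration, not OpenClaw's implementation, and `VariableStore`, `NodeContext`, and the method names are invented for the example. The three scopes behave as follows:

- SYSTEM values are exposed through a read-only `MappingProxyType`.
- SESSION data is keyed by conversation and dropped when the conversation ends.
- VISITOR data persists per visitor.

A node only ever receives the three scopes for its own conversation and visitor.

```python
from dataclasses import dataclass
from types import MappingProxyType
from typing import Any, Mapping


@dataclass
class NodeContext:
    """Everything a single workflow node is allowed to see."""
    system: Mapping[str, Any]     # read-only view of platform data
    session: dict[str, Any]       # this conversation only
    visitor: dict[str, Any]       # this visitor only, persists across sessions


class VariableStore:
    """Hypothetical three-scope variable store (illustrative, not OpenClaw's API)."""

    def __init__(self, system: dict[str, Any]) -> None:
        # SYSTEM: copied, then exposed through a read-only proxy so nodes cannot modify it.
        self._system = MappingProxyType(dict(system))
        self._sessions: dict[str, dict[str, Any]] = {}   # keyed by conversation ID
        self._visitors: dict[str, dict[str, Any]] = {}   # keyed by visitor ID

    def context_for(self, conversation_id: str, visitor_id: str) -> NodeContext:
        # A node receives only its own conversation's session and its own visitor record.
        return NodeContext(
            system=self._system,
            session=self._sessions.setdefault(conversation_id, {}),
            visitor=self._visitors.setdefault(visitor_id, {}),
        )

    def end_conversation(self, conversation_id: str) -> None:
        # SESSION variables expire with the conversation; VISITOR variables remain.
        self._sessions.pop(conversation_id, None)
```

Isolation here comes from what each node is handed, not from asking the model to stay in bounds. There is simply no reference to another visitor's data for the LLM to "reason" its way into.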

Multi-Model Selection Per Node

Not every task in a customer support workflow requires the same AI model. OpenClaw lets you select the model per node:

- Fast, cost-efficient models for intent classification and simple Q&A

- Reasoning models for complex escalation decisions, multi-step troubleshooting, and policy interpretation

- Extended context models for processing long conversation histories or large knowledge bases

This multi-model approach is both a cost optimization and a guardrail. Using a lightweight model for routine classification reduces the probability of overthinking simple queries — the agentic equivalent of using a calculator for arithmetic instead of asking a philosopher.
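Per-node model selection amounts to a static mapping decided at design time. In this sketch the model identifiers are placeholders rather than real model names, and the node names are hypothetical. An unmapped node raises an error instead of falling back to a default, so the workflow never picks a model implicitly at runtime.

```python
from enum import Enum


class ModelTier(Enum):
    # Placeholder identifiers, not real model names.
    FAST = "fast-model"
    REASONING = "reasoning-model"
    LONG_CONTEXT = "long-context-model"


# Hypothetical node names; the assignment is fixed when the workflow is designed.
NODE_MODELS = {
    "classify_intent": ModelTier.FAST,
    "simple_faq": ModelTier.FAST,
    "escalation_decision": ModelTier.REASONING,
    "troubleshooting": ModelTier.REASONING,
    "policy_interpretation": ModelTier.REASONING,
    "history_summary": ModelTier.LONG_CONTEXT,
}


def model_for(node: str) -> ModelTier:
    """Look up a node's model; an unmapped node is a configuration error, not a fallback."""
    if node not in NODE_MODELS:
        raise KeyError(f"no model configured for node {node!r}")
    return NODE_MODELS[node]
```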

Building Agentic Customer Support With OpenClaw

Theory is useful. Let's look at how deterministic AI workflow architecture works in practice.

Workflow Example — Escalation With Sentiment Analysis

A governed escalation workflow in OpenClaw:

- AI Conversation Node (fast model): Classify customer intent and extract sentiment score. Store results in SESSION variables.

- Condition Node: Check sentiment score against threshold. Below -0.5? Route to escalation path.

- AI Conversation Node (reasoning model): For sentiment at or above the threshold, generate a contextual response using the knowledge base and session context.

- Validate Block: Verify response doesn't contain restricted content (pricing commitments, legal statements, PII). Success → deliver to customer. Fail → route to human handoff.

- Human Handoff Node (escalation path): Transfer full context — conversation history, sentiment scores, intent classification, variables collected — to live chat agent.

Every path is defined. Every failure has a handler. The AI operates with full natural language intelligence within deterministic boundaries.
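The escalation workflow above can be sketched in Python with the model calls passed in as plain functions, which keeps every branch visible in code. The `classify` and `respond` callables stand in for the two AI Conversation Nodes. The regular expressions in the Validate Block are deliberately simplistic placeholders for pricing, legal, and PII checks, and a real deployment would need far more robust detection.

```python
import re
from typing import Callable

SENTIMENT_THRESHOLD = -0.5  # from the workflow above; assumes a -1..1 sentiment scale

# Deliberately simple placeholder checks for the Validate Block.
RESTRICTED_PATTERNS = [
    re.compile(r"\bwe (?:guarantee|promise)\b.*\$\d", re.IGNORECASE),  # pricing commitment
    re.compile(r"\blegal(?:ly)? (?:advice|binding)\b", re.IGNORECASE),  # legal statement
    re.compile(r"\b\d{4}[ -]?\d{4}[ -]?\d{4}[ -]?\d{4}\b"),            # card-number-like PII
]


def validate_block(text: str) -> bool:
    """Success handle if no restricted pattern matches, fail handle otherwise."""
    return not any(p.search(text) for p in RESTRICTED_PATTERNS)


def run_escalation_workflow(
    message: str,
    classify: Callable[[str], tuple[str, float]],   # fast model: (intent, sentiment)
    respond: Callable[[str, dict], str],            # reasoning model: draft reply
) -> tuple[str, object]:
    session: dict = {}
    intent, sentiment = classify(message)                    # AI Conversation Node
    session.update(intent=intent, sentiment=sentiment)       # stored as SESSION variables
    if sentiment < SENTIMENT_THRESHOLD:                      # Condition Node
        session["escalation_reason"] = "negative_sentiment"
        return ("human_handoff", session)
    draft = respond(message, session)                        # AI Conversation Node
    if validate_block(draft):                                # Validate Block: success
        return ("deliver", draft)
    session["escalation_reason"] = "restricted_content"      # Validate Block: fail
    return ("human_handoff", session)
```

Because the model calls are injected, each route (negative sentiment, restricted content, clean delivery) can be tested with stubbed models. That is one practical payoff of defining every path up front.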

Workflow Example — Order Status With API Validation

A production order lookup workflow:

- AI Capture Node: Extract order number from natural language input. Store in SESSION variable.

- Validate Block: Check order number format against regex pattern. Success → proceed. Fail → ask customer to re-enter.

- API Call Node: Query the order management system with the validated order number. Success (2xx) → parse response into SESSION variables (status, tracking, ETA). Error (4xx/5xx or timeout) → route to error handler.

- Condition Node: Check order status. If "delivered" → route to delivery confirmation flow. If "in transit" → route to tracking details flow. If "cancelled" → route to cancellation support flow.

- Message Node: Display formatted order information with tracking link.

No autonomous API discovery. No dynamic tool selection. No improvised responses when the API fails. The customer gets accurate information or appropriate escalation — every time.
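The order lookup can be sketched the same way; the function returns the name of the next node to run. The order-number format (`AB123456`: two uppercase letters, six digits) is a hypothetical stand-in for whatever pattern your order system uses. The `lookup` callable represents the API Call node's request. One design choice goes beyond the steps listed above: a status the Condition Node doesn't recognize routes to a human instead of being guessed at.

```python
import re
from typing import Callable, Optional

# Hypothetical order-number format: two uppercase letters followed by six digits.
ORDER_NUMBER = re.compile(r"[A-Z]{2}\d{6}")

# Condition Node: each known status maps to exactly one follow-up flow.
STATUS_ROUTES = {
    "delivered": "delivery_confirmation_flow",
    "in transit": "tracking_details_flow",
    "cancelled": "cancellation_support_flow",
}


def order_status_workflow(
    captured: Optional[str],                        # output of the AI Capture node
    lookup: Callable[[str], tuple[int, dict]],      # API Call node: (status code, body)
    session: dict,                                  # SESSION variables for this conversation
) -> str:
    # Validate Block: the fail handle asks the customer to re-enter the number.
    if captured is None or not ORDER_NUMBER.fullmatch(captured):
        return "ask_reenter_order_number"
    session["order_number"] = captured
    # API Call node: timeouts and non-2xx responses take the error handle.
    try:
        code, body = lookup(captured)
    except TimeoutError:
        return "error_handler"
    if not 200 <= code < 300:
        return "error_handler"
    # Success handle: parse the response into SESSION variables.
    for key in ("status", "tracking", "eta"):
        session[key] = body.get(key)
    # Unrecognized or missing statuses go to a human rather than being guessed at.
    return STATUS_ROUTES.get(session["status"], "human_handoff")
```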

Cost Comparison — $4.60 to $1.45 Per Interaction

Industry data for 2026 shows a human-handled customer service interaction costs roughly $4.60 on average, while an AI-handled interaction costs approximately $1.45 — a 68% reduction in cost per interaction.

But this 68% reduction only holds when AI interactions actually resolve the customer's issue. Autonomous agents that hallucinate, access wrong data, or fail unpredictably generate expensive re-contacts: the customer calls back, gets a human, and the company pays $4.60 on top of the failed AI interaction cost.

Deterministic workflows protect the cost reduction by ensuring AI interactions either resolve correctly or escalate cleanly. There's no middle ground where the AI confidently delivers wrong information and the customer discovers the error later.
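A quick expected-cost model shows why re-contacts matter. It assumes each failed AI interaction produces exactly one human re-contact at the $4.60 average and ignores every other cost. Treat it as a back-of-the-envelope illustration rather than a forecast.

```python
HUMAN_COST = 4.60  # average cost of a human-handled interaction (figure from the text)
AI_COST = 1.45     # average cost of an AI-handled interaction (figure from the text)


def expected_cost(recontact_rate: float) -> float:
    """Expected cost of an AI-first contact if a fraction of them come back to a human.

    Assumes each failure adds exactly one human interaction and nothing else.
    """
    return AI_COST + recontact_rate * HUMAN_COST


def savings_vs_human(recontact_rate: float) -> float:
    """Fractional saving versus handling every contact with a human agent."""
    return 1 - expected_cost(recontact_rate) / HUMAN_COST


# Re-contact rate at which the AI-first path stops saving money.
BREAK_EVEN_RATE = (HUMAN_COST - AI_COST) / HUMAN_COST

for rate in (0.0, 0.10, 0.30):
    print(f"re-contact {rate:.0%}: ${expected_cost(rate):.2f} per contact, "
          f"{savings_vs_human(rate):.1%} saved")
print(f"break-even re-contact rate: {BREAK_EVEN_RATE:.1%}")
```

With no re-contacts the saving is about 68.5%, the figure behind the headline 68%. At a 10% re-contact rate it falls to about 58.5%, and at 30% to about 38.5%. At a re-contact rate of about 68.5%, the AI-first path costs as much as routing everything to humans. The break-even rate equals the zero-failure saving because both reduce to 1 − $1.45 / $4.60.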

Companies see an average return of $3.50 for every $1 invested in AI customer service. Governed workflows help protect that return by preventing the costly re-contacts that erode it.

Omnichannel Deployment With Built-In Guardrails

A governed AI customer support workflow is only valuable if it reaches customers where they already are.

WhatsApp Business Compliance

175 million users message business accounts daily on WhatsApp, with open rates of 95-98% compared to 20-25% for email. WhatsApp Business API adoption has grown to over 5 million businesses globally.

WhatsApp automation with OpenClaw deploys the same governed workflow to WhatsApp with automatic platform adaptations — message formatting, media handling, and interactive element support — while maintaining all guardrails: dual-handle routing, variable scopes, and audit trails.

The guardrails travel with the workflow. A governance constraint defined once applies to every channel — no per-platform security configuration.

One Workflow, Every Channel

Chat Data deploys a single OpenClaw workflow to all supported channels simultaneously:

- Website Widget — embedded customer support

- WhatsApp Business API — the dominant business messaging channel

- Slack — internal employee support and IT helpdesk

- Discord — community engagement and support

- Facebook Messenger — social commerce and customer service

- Instagram DMs — direct customer engagement

- Telegram — international markets

- LINE — Asia-Pacific customer base

Platform-specific adaptations happen automatically. Markdown translation for Facebook platforms, URL signing for Discord and Slack, audio response conversion, interactive element formatting — all handled by Chat Data's unified message architecture.

Build one governed workflow. Deploy everywhere. Every channel inherits the same guardrails.
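Here is a hypothetical sketch of guardrails traveling with the workflow. The guardrail check runs once on the channel-neutral reply, and only then does a per-channel formatter adapt it. The formatter table is an assumption for illustration. WhatsApp does mark bold text with single asterisks, but treating Messenger and Instagram as plain text here is a simplification, not a description of Chat Data's actual message architecture.

```python
import re
from typing import Callable

BOLD = re.compile(r"\*\*(.+?)\*\*")  # Markdown **bold** spans in the channel-neutral reply


def to_plain(text: str) -> str:
    return BOLD.sub(r"\1", text)


def to_whatsapp(text: str) -> str:
    return BOLD.sub(r"*\1*", text)  # WhatsApp marks bold with single asterisks


# Hypothetical formatter table; real per-channel handling covers far more than bold text.
FORMATTERS: dict[str, Callable[[str], str]] = {
    "web": lambda text: text,   # assume the widget renders Markdown directly
    "whatsapp": to_whatsapp,
    "messenger": to_plain,      # simplification: treat as plain text
    "instagram": to_plain,      # simplification: treat as plain text
}

HANDOFF_NOTICE = "Connecting you with a member of our team."


def deliver(reply: str, channel: str, guardrail: Callable[[str], bool]) -> str:
    # The guardrail runs once, on the channel-neutral reply, before any formatting,
    # so every channel gets the same decision.
    if not guardrail(reply):
        return HANDOFF_NOTICE
    return FORMATTERS[channel](reply)
```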

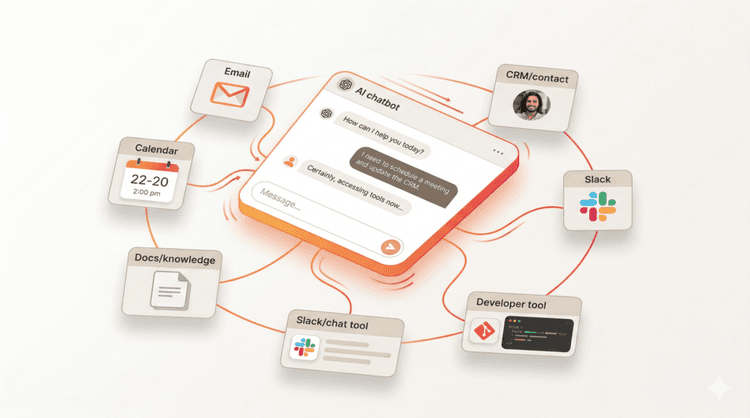

Human Handoff — The Guardrail That Keeps Customers Happy

The most important guardrail in agentic AI customer support isn't technical — it's the ability to seamlessly transition to a human when the AI reaches its limits.

Context Continuity as the #1 Success Factor

The reason customers hate escalation isn't the wait time — it's repeating themselves. When an autonomous agent fails and "escalates," the human agent often starts from scratch because the AI's reasoning path was opaque.

Chat Data's workflow-based human handoff solves this by transferring structured context:

- Conversation history: Every message exchanged between customer and AI

- Variables collected: Order numbers, account details, issue categories, sentiment scores — all SESSION and VISITOR variables

- Workflow path taken: Which nodes executed, which conditions evaluated, where the escalation triggered

- AI reasoning: The model's classification, confidence scores, and response attempts

The human agent sees exactly what the AI tried, why it escalated, and what the customer has already provided. No repeat questions. No context loss. Resolution from the first human interaction.
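The structured handoff context can be sketched as a single serializable record. The field names below are hypothetical and simply mirror the four items listed above. The `summary` method shows how a live agent could see at a glance where the workflow stopped and what the customer has already provided.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Any


@dataclass
class HandoffContext:
    """Hypothetical handoff payload mirroring the four items listed above."""
    conversation: list[dict[str, str]]        # every customer/AI message, in order
    session_vars: dict[str, Any]              # SESSION variables collected so far
    visitor_vars: dict[str, Any]              # VISITOR variables for this customer
    path_taken: list[str]                     # node IDs in execution order
    ai_reasoning: dict[str, Any] = field(default_factory=dict)  # intent, confidence, drafts

    def summary(self) -> str:
        """One line for the live agent: where the workflow stopped and what is known."""
        stopped_at = self.path_taken[-1] if self.path_taken else "unknown"
        known = ", ".join(sorted(self.session_vars))
        return f"Escalated at {stopped_at}; already collected: {known}"

    def to_json(self) -> str:
        # Plain JSON so any live-chat tool can ingest the payload.
        return json.dumps(asdict(self))
```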

This isn't a nice-to-have. Research consistently shows that having to repeat information is among the top customer frustrations in support interactions. Context continuity in handoff is a guardrail that protects customer experience.

From Disillusionment to Production Reality

The agentic AI hype cycle is running hot. AI agents sit at the Peak of Inflated Expectations, and, as with generative AI before them, the Trough of Disillusionment is coming. Teams that deployed autonomous agents without guardrails will face the same reckoning: inconsistent results, security incidents, compliance failures, and customer experience degradation.

But disillusionment doesn't mean the technology is wrong. It means the implementation was. Agentic AI customer support works — when it's built on deterministic workflow architecture with proper guardrails.

The market reality:

- $15.12 billion AI customer service market in 2026, up 25% from 2024

- 40% of enterprise apps projected to feature task-specific AI agents by end of 2026

- 88% of organizations reported confirmed or suspected AI agent security incidents: the governance gap is real

- 68% lower cost per AI-handled interaction, a saving that governed workflows protect

- 175 million daily users messaging business accounts on WhatsApp alone

The businesses that navigate the trough successfully will be those that chose governed intelligence over autonomous hope. They'll deploy OpenClaw workflows with dual-handle routing, variable scopes, multi-model selection, and omnichannel deployment — agentic AI customer support that actually works in production.

Start building governed AI customer support today — Chat Data's OpenClaw workflow platform delivers agentic intelligence with deterministic guardrails. As the industry learned from the evolution from rule-based chatbots to AI agents, the winning architecture isn't the most autonomous — it's the most governed.