Custom LLM Chatbot: Bring Your Own Backend for AI Chat

Emma Ke

CMO, on April 3, 2026

Key takeaways

- A custom LLM chatbot appeals to teams who need more architecture control than a default hosted flow provides.

- The main tradeoff is flexibility vs. speed: custom backends unlock routing, privacy, and observability but add complexity.

- Most teams do not need a fully custom model-serving stack -- preserving your business logic and tool layer is often enough.

- Related guides: chatbot SDK and AI workflow automation.

What is a custom LLM chatbot?

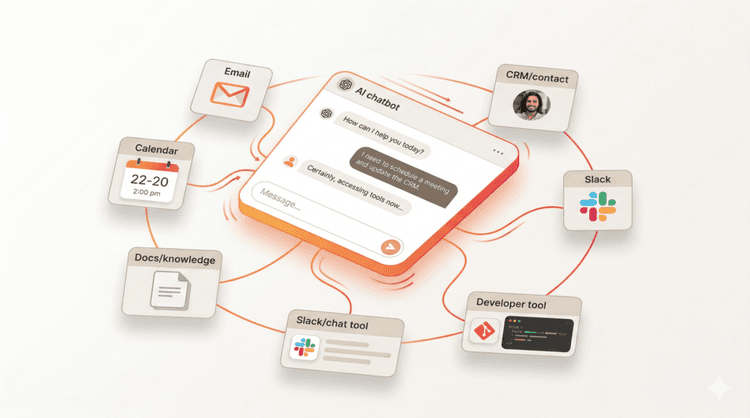

A custom LLM chatbot is a chatbot where the team controls more of the model and backend layer instead of treating the assistant as a fixed black box. That control may include model routing, tool orchestration, backend services, access rules, or how user context is attached during the conversation.

This article is for technical founders, product teams, and engineering leads whose question is more specific than “can I add a chatbot?” They want to know whether they can preserve architectural flexibility while still moving fast.

Why teams ask for a bring-your-own-backend chatbot

Most teams do not want a custom backend because they love complexity. They want it because one or more of these requirements becomes important:

- model flexibility

- backend business logic

- privacy controls

- custom observability

- account-aware actions

- integration with internal systems

In other words, the request is rarely about novelty. It is about control.

It is also about tool architecture. OpenAI’s tool reference makes it clear that modern assistant stacks can work with function calls and file search rather than plain text generation alone. That is part of why more teams now think in terms of backend orchestration and action layers instead of standalone chat widgets (OpenAI function calling guide).
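
To make the idea of an action layer concrete, here is a minimal Python sketch of a backend dispatching a model-requested function call. It assumes the model returns a tool name plus a JSON string of arguments, which is the general shape function calling uses. The `get_order_status` tool, the `TOOLS` registry, and the sample order data are hypothetical and do not come from any vendor API.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical in-memory data standing in for a real order system.
ORDERS = {"A100": "shipped", "A101": "processing"}


def get_order_status(order_id: str) -> Dict[str, Any]:
    """Hypothetical backend action the assistant is allowed to call."""
    status = ORDERS.get(order_id)
    if status is None:
        return {"ok": False, "error": f"unknown order {order_id}"}
    return {"ok": True, "order_id": order_id, "status": status}


# Registry of tools the backend exposes to the model (names are assumptions).
TOOLS: Dict[str, Callable[..., Dict[str, Any]]] = {
    "get_order_status": get_order_status,
}


def dispatch_tool_call(name: str, arguments_json: str) -> Dict[str, Any]:
    """Run a model-requested tool call and return a JSON-serializable result."""
    tool = TOOLS.get(name)
    if tool is None:
        return {"ok": False, "error": f"unknown tool {name}"}
    try:
        args = json.loads(arguments_json)
    except json.JSONDecodeError:
        return {"ok": False, "error": "arguments were not valid JSON"}
    if not isinstance(args, dict):
        return {"ok": False, "error": "arguments must be a JSON object"}
    try:
        return tool(**args)
    except TypeError as exc:
        # Typically raised when the model supplies missing or unexpected arguments.
        return {"ok": False, "error": str(exc)}


if __name__ == "__main__":
    print(dispatch_tool_call("get_order_status", '{"order_id": "A100"}'))
```

The point of the design is that the model only proposes a call. Your backend decides which tools exist, validates the arguments, and returns a structured result that can be fed back into the conversation.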

When a default chatbot setup is enough

A standard hosted chatbot setup is still a strong fit when:

- you mainly need FAQ or documentation answers

- the chatbot is public-facing

- the workflows are relatively simple

- speed matters more than architecture customization

That is why not every chatbot project should jump to a custom backend. Complexity is only worth it when it unlocks a real product or operational advantage.

When a custom LLM chatbot becomes the better fit

1. You need model routing flexibility

Some teams want to switch models by use case, latency target, cost, or customer segment. That usually pushes them toward a more customizable architecture.
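
As a rough illustration, routing by use case, latency target, and customer segment can be a small, testable function in your own backend. The model identifiers, task labels, tier names, and the 1000 ms threshold below are placeholders chosen for this sketch, not recommendations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    task: str            # e.g. "faq", "code", "analysis", "summarize"
    max_latency_ms: int  # latency budget for this request
    customer_tier: str   # e.g. "free" or "enterprise"


# Hypothetical model identifiers; substitute whatever your providers expose.
FAST_MODEL = "small-fast-model"
BALANCED_MODEL = "mid-size-model"
STRONG_MODEL = "large-reasoning-model"


def choose_model(req: Request) -> str:
    """Pick a model from the task, latency budget, and customer tier."""
    # A tight latency budget overrides every other consideration.
    if req.max_latency_ms < 1000:
        return FAST_MODEL
    # Harder tasks get the strongest model only on the paid tier,
    # which keeps cost bounded for free accounts.
    if req.task in {"code", "analysis"}:
        return STRONG_MODEL if req.customer_tier == "enterprise" else BALANCED_MODEL
    # Simple lookups do not need an expensive model.
    if req.task == "faq":
        return FAST_MODEL
    return BALANCED_MODEL


if __name__ == "__main__":
    print(choose_model(Request(task="code", max_latency_ms=5000, customer_tier="enterprise")))
```

Keeping the rule in one function means routing changes are ordinary code changes that you can review and test, rather than settings scattered across a hosted dashboard.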

2. You need tighter privacy and control

If the chatbot must operate under stricter data policies, custom context handling and backend logic can matter more than a default setup.
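
One simple form of custom context handling is redacting sensitive values before any text leaves your backend. The sketch below uses two illustrative regular expressions. They are assumptions for demonstration only, and real PII detection usually needs more than pattern matching.

```python
import re

# Illustrative patterns only; they will miss many real-world formats.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# 13 to 16 digits, optionally separated by single spaces or hyphens.
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def redact(text: str) -> str:
    """Replace email addresses and card-like numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = CARD_RE.sub("[CARD]", text)
    return text


if __name__ == "__main__":
    message = "Email jane.doe@example.com about card 4111 1111 1111 1111 please"
    print(redact(message))
```

Running the redaction step inside your own service, before the model call, is what lets you apply and audit the policy yourself instead of relying on a downstream setting.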

3. You need deep workflow integration

A custom backend is often useful when the chatbot has to interact with several internal systems, apply business rules, and maintain a clear audit path.
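
A minimal sketch of that pattern: a backend action that applies a business rule and writes an audit record for every decision. The refund limit, the event names, and the in-memory `AUDIT_LOG` are hypothetical stand-ins for your own rules and storage.

```python
import json
import time
from typing import Any, Dict, List

# Stand-in for an append-only audit store (a database table or log stream in practice).
AUDIT_LOG: List[str] = []

# Hypothetical business rule: refunds above this amount need a human.
REFUND_LIMIT_CENTS = 5000


def record(event: str, **fields: Any) -> None:
    """Append one JSON line describing what happened and when."""
    entry = {"ts": time.time(), "event": event, **fields}
    AUDIT_LOG.append(json.dumps(entry, sort_keys=True))


def request_refund(user_id: str, order_id: str, amount_cents: int) -> Dict[str, Any]:
    """Apply the refund rule and leave an audit record for every decision."""
    record("refund_requested", user_id=user_id, order_id=order_id, amount_cents=amount_cents)
    if amount_cents <= 0:
        record("refund_rejected", order_id=order_id, reason="non_positive_amount")
        return {"status": "rejected"}
    if amount_cents > REFUND_LIMIT_CENTS:
        record("refund_escalated", order_id=order_id, reason="over_limit")
        return {"status": "needs_human_review"}
    record("refund_approved", order_id=order_id)
    return {"status": "approved"}


if __name__ == "__main__":
    print(request_refund("u1", "A100", 1200))
    print(request_refund("u1", "A101", 9000))
    print(len(AUDIT_LOG), "audit entries written")
```

Because the rule and the log live in your backend, the assistant can trigger the action but cannot bypass the limit or skip the record.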

4. You are embedding chat into a product

The closer the chatbot gets to your core product UX, the more likely you are to care about architecture, latency, permissions, and account context.

What “bring your own backend” really means

Teams often use this phrase loosely. In practice, it can mean several different things:

- using your own APIs for action handling

- controlling the system that passes user context into the chatbot

- routing different prompts or tasks through different model logic

- attaching the chatbot to internal tools, state, and analytics

The phrase sounds technical, but the business reason is simple: your chatbot needs to behave like part of your product, not a disconnected plugin.

That distinction matters: not every buyer needs a fully custom model-serving stack. In many cases, “bring your own backend” really means preserving your business logic, tool layer, and data flow while using a platform for the chat experience, orchestration, or deployment surface.
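
One of the meanings listed above, controlling the system that passes user context into the chatbot, can be sketched as follows. The field names and the allowlist are assumptions for this example. The role and content message shape is a common convention across chat APIs, so check your provider's documentation for the exact format it expects.

```python
from typing import Any, Dict, List

# Fields the backend is willing to share with the model (an assumption for this sketch).
ALLOWED_CONTEXT_FIELDS = ("plan", "locale", "account_age_days")


def build_messages(user_record: Dict[str, Any], question: str) -> List[Dict[str, str]]:
    """Attach only allowlisted user context to the conversation."""
    context = {k: user_record[k] for k in ALLOWED_CONTEXT_FIELDS if k in user_record}
    context_lines = "\n".join(f"{key}: {value}" for key, value in context.items())
    system = (
        "You are a support assistant for the product.\n"
        "Known user context:\n" + context_lines
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": question},
    ]


if __name__ == "__main__":
    record = {"plan": "pro", "email": "a@b.com", "locale": "en-US"}
    for message in build_messages(record, "How do I export my data?"):
        print(message)
```

The allowlist is the important part: fields such as the email address stay in your backend unless someone deliberately adds them.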

How Chat Data supports custom LLM chatbot architectures

If you want to keep backend flexibility without building everything from scratch, Chat Data offers several integration points that matter for custom LLM chatbot workflows:

- chatbot SDK for embedding chat into your own product surface

- AI workflow automation for connecting business logic, APIs, and routing

- white label chatbot for branded deployment across client accounts

Here is what that looks like in practice:

- the Chat Data SDK, as described in its changelog, positions embedded chat as part of your own website or product

- AI workflow automation shows how business logic, APIs, and routing can remain part of the solution

- the MCP integration, according to its changelog, adds 834 apps and 10,000+ tools as action surfaces

- chatbot-specific API keys help teams isolate access when the backend needs tighter operational boundaries

Questions a serious buyer will ask

If someone is evaluating a custom LLM chatbot approach, they usually want answers to these questions:

How much backend logic can I keep?

They want to know whether existing systems, routing logic, and APIs can remain in place.

Can I choose how the model is used?

They want flexibility in prompting, tool usage, or backend orchestration rather than a rigid preset.

What happens to observability and control?

They want to preserve debugging, logging, analytics, and operational clarity as the assistant becomes more capable.
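
As a hedged example of what preserving observability can look like, the wrapper below logs a trace ID, the model name, latency, and payload sizes around every model call. `call_model` is a placeholder for whatever client function your stack uses, and `fake_model` exists only so the sketch runs on its own.

```python
import logging
import time
import uuid
from typing import Callable

logger = logging.getLogger("chatbot")


def traced_call(model: str, prompt: str, call_model: Callable[[str, str], str]) -> str:
    """Call the model and log the outcome with a per-call trace ID."""
    trace_id = uuid.uuid4().hex
    start = time.perf_counter()
    try:
        reply = call_model(model, prompt)
    except Exception:
        # Log the failure with its traceback, then let the caller handle it.
        logger.exception("model_call_failed trace_id=%s model=%s", trace_id, model)
        raise
    elapsed_ms = (time.perf_counter() - start) * 1000
    logger.info(
        "model_call_ok trace_id=%s model=%s latency_ms=%.1f prompt_chars=%d reply_chars=%d",
        trace_id, model, elapsed_ms, len(prompt), len(reply),
    )
    return reply


def fake_model(model: str, prompt: str) -> str:
    """Placeholder for a real model client, used only for this example."""
    return f"[{model}] echo: {prompt}"


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    print(traced_call("mid-size-model", "Where is my order?", fake_model))
```

Logging sizes rather than raw prompt text is a deliberate choice here, since prompts may contain user data that should not end up in log storage.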

Can this still move fast?

A custom LLM chatbot only makes business sense if it keeps enough flexibility without turning into a long infrastructure project.

A practical decision rule

Use a default hosted chatbot setup when you mainly need content answers, fast deployment, and a public-facing assistant.

Move toward a more custom LLM chatbot architecture when you need:

- model or prompt routing by use case

- protected actions tied to user identity

- business logic that lives in your own backend

- stronger observability and operational control

- action-taking assistants that interact with multiple systems
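
To illustrate protected actions tied to user identity, here is a small sketch in which the backend checks both a scope and resource ownership before acting. The `Session` type, the scope string, and the subscription data are hypothetical. The key assumption is that identity comes from your server-side session, never from arguments the model supplies.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet


@dataclass(frozen=True)
class Session:
    """Identity established by your own auth layer, not by the model."""
    user_id: str
    scopes: FrozenSet[str]


class Forbidden(Exception):
    """Raised when the current user may not perform the action."""


# Hypothetical ownership data: subscription ID -> owning user ID.
SUBSCRIPTIONS: Dict[str, str] = {"sub_1": "user_a", "sub_2": "user_b"}


def cancel_subscription(session: Session, subscription_id: str) -> str:
    """Cancel a subscription only if the session user owns it and has the scope."""
    if "subscriptions:write" not in session.scopes:
        raise Forbidden("missing scope subscriptions:write")
    if SUBSCRIPTIONS.get(subscription_id) != session.user_id:
        raise Forbidden("subscription does not belong to this user")
    return f"cancelled {subscription_id}"


if __name__ == "__main__":
    alice = Session(user_id="user_a", scopes=frozenset({"subscriptions:write"}))
    print(cancel_subscription(alice, "sub_1"))
    try:
        cancel_subscription(alice, "sub_2")
    except Forbidden as exc:
        print("blocked:", exc)
```

Even if a prompt persuades the model to request someone else's subscription ID, the ownership check in the backend rejects the call.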

The right choice depends on what your product actually requires, not on whether "custom" sounds more impressive.

Related resources

If you are evaluating a custom LLM chatbot approach, these guides cover related topics:

- Chatbot SDK -- embed AI chat into your product with a single-line script

- AI workflow automation -- connect chatbot conversations to APIs, tools, and business logic

- What is an MCP chatbot? -- understand how Model Context Protocol adds a tool layer to chat

- How to add user authentication to a chatbot -- design secure, account-aware assistant experiences

FAQ

What is a custom LLM chatbot?

A custom LLM chatbot is a chatbot with a more flexible model and backend architecture, often allowing teams to keep control over routing, tools, context, or backend actions.

Do I need my own backend to build a serious chatbot?

Not always. Many chatbots work well with a standard platform setup. A custom backend becomes more useful when the assistant needs deeper product integration, privacy control, or complex business logic.

How much does a custom LLM chatbot cost to build?

It depends on how much of the stack you own. Using a platform like Chat Data with SDK and workflow support reduces the build scope significantly compared to building model serving, chat UI, and orchestration from scratch.

Sources and implementation references

- OpenAI function calling guide

- Chat Data SDK changelog

- Chat Data MCP integration changelog

- Chatbot-specific API keys changelog

- AI workflow automation

Conclusion

A custom LLM chatbot is most relevant when a team needs architectural control, not just a chat interface. The decision is not build-vs-buy in absolute terms. It is about how much backend flexibility you keep while still moving fast.

If you are evaluating this approach, start with chatbot SDK for embedding and AI workflow automation for connecting your backend logic to the conversation layer.